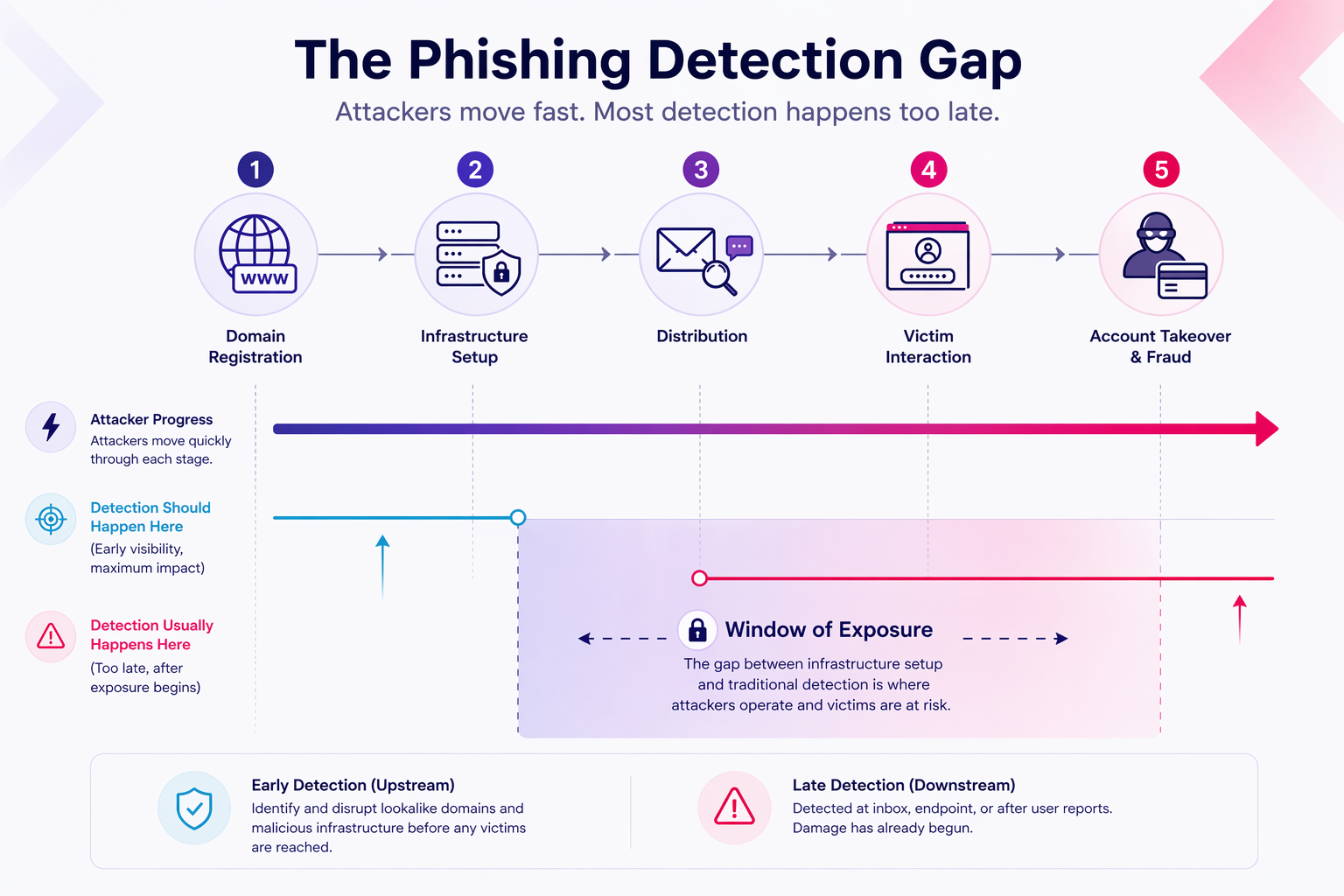

By the time a phishing email lands in an inbox, the attacker’s infrastructure has already been live for hours.

That’s not a hypothetical. Zimperium’s 2024 research found that 60% of newly created phishing domains receive a TLS certificate within the first two hours of registration. The site is credentialed, hosted, and ready before most security teams have any signal it exists. Once a campaign goes live, the window is short: the USENIX ‘Sunrise to Sunset’ study found the average phishing campaign spans just 21 hours between first and last victim visit. Credentials are harvested and accounts are compromised, often before a single user report reaches the SOC.

This is the detection gap. Most organizations aren’t closing it.

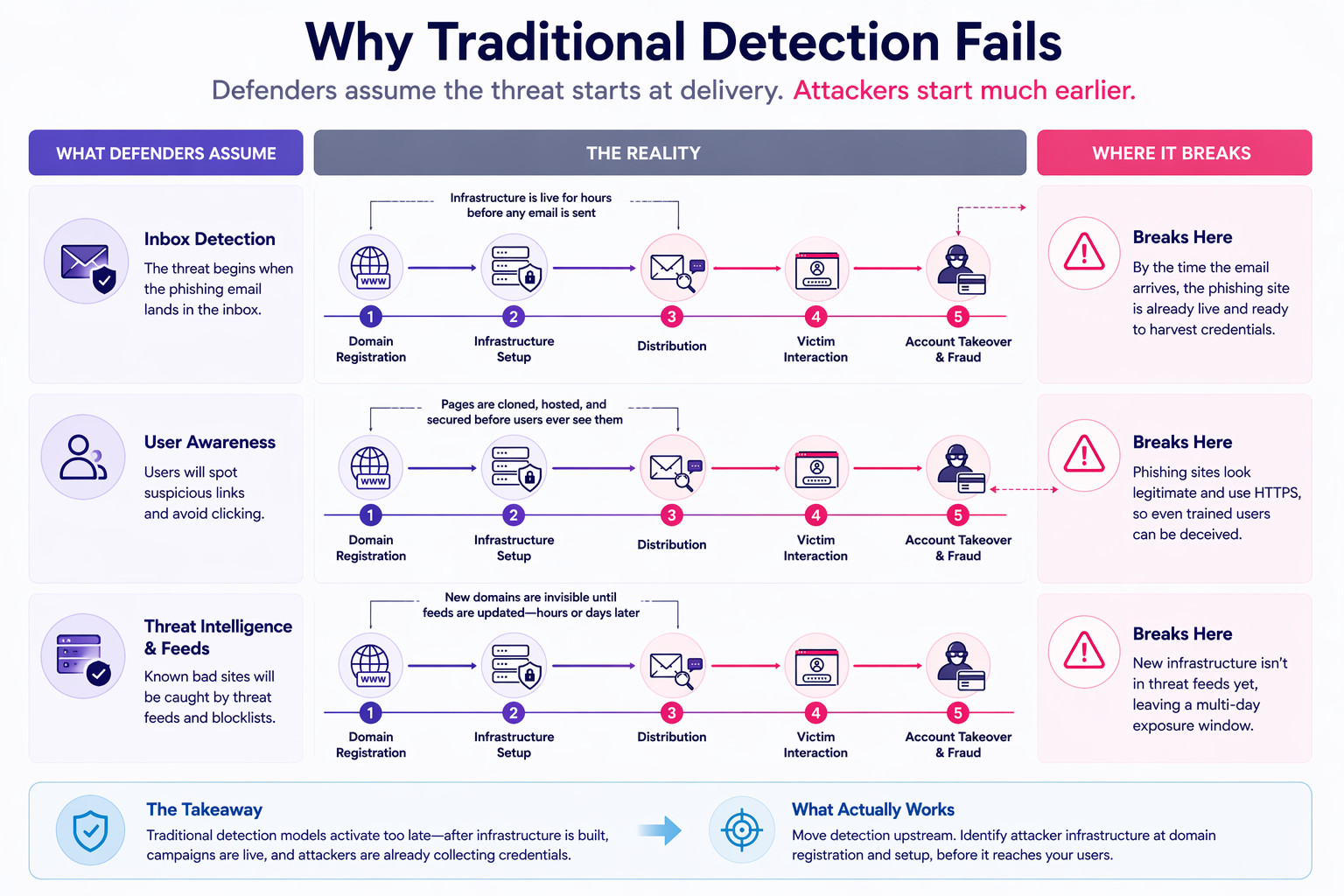

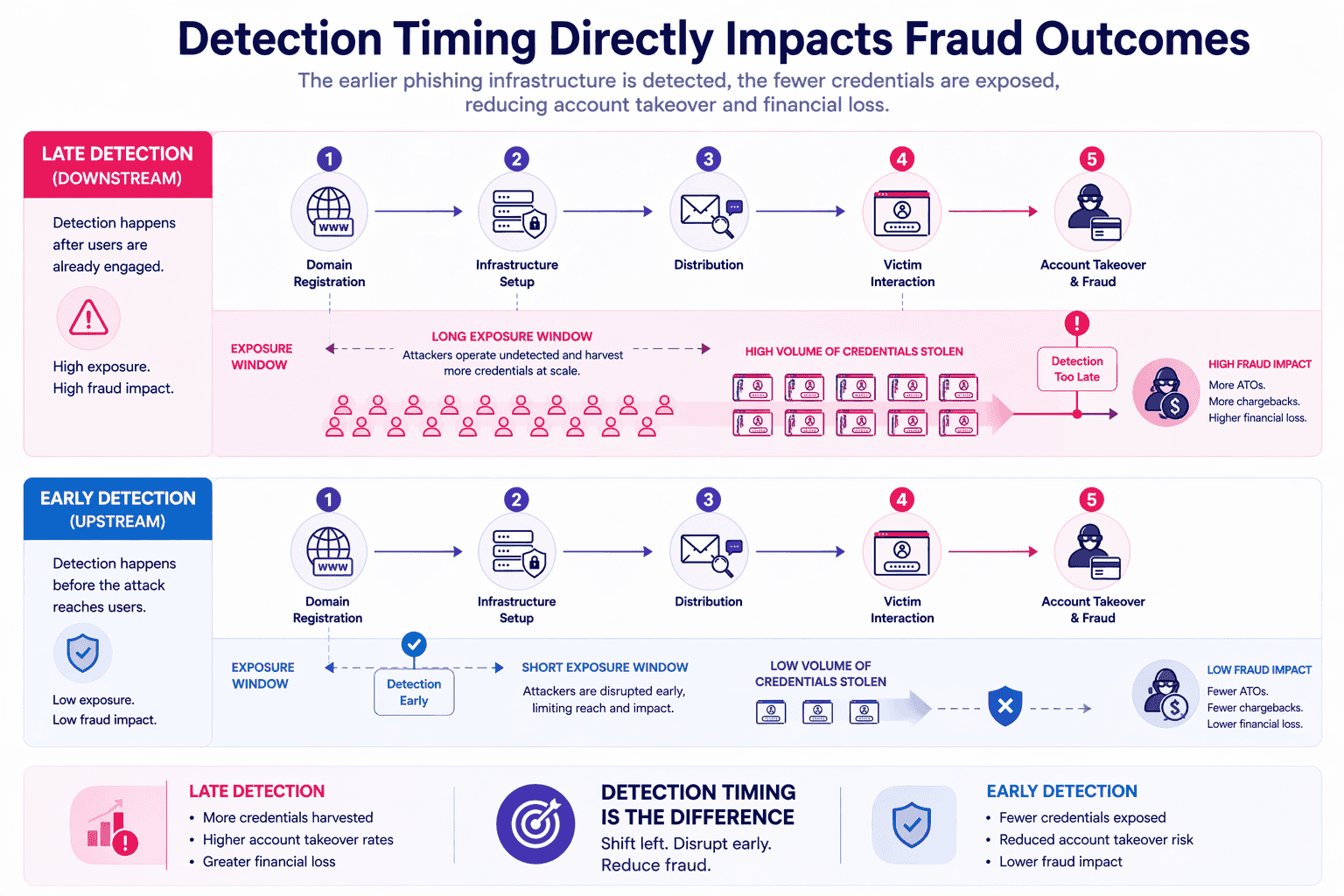

Traditional phishing detection is built around the inbox. Email filtering, user reporting, and post-delivery analysis are all valuable controls, but they operate downstream of the attack. By the time those signals fire, the phishing infrastructure is already deployed and active. For impersonation-driven campaigns targeting your brand, your customers, and your digital channels, that timing gap is the exposure window attackers rely on.

This guide is written for Heads of Security, Digital Risk Leads, Fraud Prevention Leaders, CISOs, SOC Managers, and Threat Intelligence Leads at mid-to-large enterprises who own the detection capability that sits upstream of the inbox.

The core argument is straightforward: phishing detection must be reframed as a lifecycle problem, not a user-behavior problem. The detection opportunity exists before distribution, at the domain registration, infrastructure configuration, and brand abuse signal layer. That’s where exposure can be reduced and where the detection window is widest.

This guide covers:

- Why inbox-level detection is necessary but structurally late

- A five-stage lifecycle model for impersonation-driven phishing campaigns

- Why security awareness training can’t carry the detection load alone

- Six upstream methods to detect phishing before users are compromised

- How early detection connects to measurable fraud reduction

- A practical framework for measuring detection maturity

If your current detection posture starts at the inbox, this guide starts earlier.

Why most phishing detection happens too late

Most enterprise phishing detection is built around the inbox. That’s a structural problem.

Email security gateways, post-delivery sandboxing, user reporting buttons – these are all valuable controls. But they share a common flaw: they’re triggered by delivery. By the time a phishing email is flagged, the attacker’s infrastructure is already live, already serving victims, and already harvesting credentials.

The numbers are concrete. According to the IBM Cost of a Data Breach Report 2025, phishing is the most frequent initial attack vector, responsible for 16% of breaches and averaging $4.8 million in breach costs per incident. The Verizon 2025 Data Breach Investigations Report places phishing firmly in the top tier of enterprise risk. These aren’t edge cases. They’re the baseline.

Yet most detection capability is concentrated at the point of delivery, not the point of setup.

Traditional detection relies on reactive signals. A user reports a suspicious link. A threat feed gets updated. A blocklist propagates. Each step takes time, and attackers don’t wait. A phishing domain can be registered, configured with a convincing lookalike page, and actively harvesting credentials for hours before it appears in any blocklist or threat intelligence feed. Research published in 2025 found that phishing domains remain accessible for an average of 11.5 days after detection. The exposure window isn’t measured in minutes. It’s measured in days.

Blocklist propagation delays compound this further. Even after a domain is flagged, it takes time for that signal to reach every email gateway, browser filter, and DNS resolver in your environment. During that gap, your customers are exposed.

Inbox-level controls aren’t useless. They reduce impact at the delivery stage and remain a necessary layer in any mature security posture. But they can’t be the primary detection layer when attack infrastructure is built and operational well before the first email is sent.

The detection gap isn’t a technology failure. It’s a timing problem. Closing it requires moving detection upstream, before distribution, not after delivery.

The phishing lifecycle model for impersonation-driven campaigns

Not all phishing follows the same playbook. The model below applies specifically to impersonation-driven phishing, campaigns where attackers clone a brand’s identity to deceive victims into surrendering credentials. This is the pattern most relevant to financial services, eCommerce, airlines, and telcos.

The core principle: the earlier you detect activity in this lifecycle, the smaller your exposure window. Stages 1 and 2 are where that window is widest, and where most organizations are currently blind.

| Stage | What Happens | Detection Opportunity | Exposure Risk |
| --- | --- | --- | --- |
| 1. Domain Registration & Lookalike Setup | Attacker registers a typosquatted, homoglyph, or combosquatted domain designed to impersonate your brand | High | Low |
| 2. Infrastructure Configuration | Hosting provisioned, TLS certificate issued, DNS configured, phishing kit or cloned page deployed | High | Low |
| 3. Distribution | Campaign launched via email, SMS, SEO poisoning, social media, or paid search | Medium | Medium |
| 4. Victim Interaction & Credential Harvesting | Users land on the phishing page and enter credentials | Low | High |
| 5. Account Takeover & Fraud | Harvested credentials used for ATO, financial fraud, lateral movement, or sold on dark web markets | Very Low | Very High |

- Stage 1: Domain registration and lookalike setup

The attack begins before any user sees a single email. Attackers register domains engineered to pass a quick visual scan, transposing letters, substituting homoglyphs, or appending brand-adjacent terms. Zscaler ThreatLabz analyzed over 30,000 lookalike domains targeting 500 major brands between February and July 2024, identifying more than 10,000 as confirmed malicious. This is the earliest and cleanest detection point in the lifecycle.

- Stage 2: Infrastructure configuration

Once the domain is live, attackers move fast. Hosting is provisioned, DNS records are configured, and a phishing kit or cloned page is deployed. TLS certificates are issued almost immediately. Zimperium found that 60% of new phishing domains receive a TLS certificate within two hours of registration. That HTTPS padlock gives the page a veneer of legitimacy before any victim has visited it. Stages 1 and 2 together represent the pre-distribution detection window, the point where disruption costs the attacker the most and costs you the least.

- Stage 3: Distribution

The campaign goes live. Phishing URLs are distributed via email, SMS, SEO-poisoned search results, social media posts, or paid advertising. This is the stage where most traditional detection tools (email filters, URL scanners, and threat feeds) first encounter the threat. By this point, the infrastructure has been operational for hours.

- Stage 4: Victim interaction and credential harvesting

Users land on the phishing page and enter their credentials. Detection at this stage reduces individual impact but can’t undo the exposure already created. The USENIX “Sunrise to Sunset” study found the average phishing attack spans 21 hours between the first and last victim visit, a wide window when detection is reactive.

- Stage 5: Account takeover and fraud

Harvested credentials are used for account takeover, financial fraud, or lateral movement, or sold on dark web markets. At this stage, the damage is done. Detection here means investigation, reimbursement, and remediation, not prevention.

The lifecycle makes the strategic case clearly: inbox-level detection is downstream, not upstream. For impersonation-driven campaigns, the pre-distribution window at Stages 1 and 2 is where detection has the highest leverage and the lowest cost.

Stage 1: Domain registration and lookalike setup

Domain registration is where impersonation-driven phishing campaigns begin, and where the earliest detection signal exists.

Attackers use three primary techniques to construct convincing lookalike domains:

- Typosquatting: character substitution, missing or extra letters, adjacent-key errors, and TLD variations (e.g., `paypa1.com`, `paypal.co`)

- Homoglyph attacks: Cyrillic or other non-Latin Unicode characters that visually mimic Latin letters, making a domain appear identical to the real brand at a glance

- Combosquatting: appending brand-adjacent words like `-security`, `-verify`, or `-login` to create domains that feel contextually legitimate (e.g., `bankname-login.com`)
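As a rough illustration of how defenders can enumerate these patterns before attackers register them, here is a minimal Python sketch. It generates single-edit typosquats (omission, duplication, adjacent transposition, and a few character substitutions), TLD variations, and combosquats for one brand label. The TLD list, keyword list, and substitution map are illustrative assumptions, not a complete catalogue. Homoglyph variants need a Unicode confusables table and are left out here.

```python
ALT_TLDS = ["com", "co", "net", "org"]               # illustrative sample only
COMBO_KEYWORDS = ["login", "verify", "security"]     # brand-adjacent terms from the text
SUBSTITUTIONS = {"l": "1i", "o": "0", "i": "1l", "e": "3"}  # assumed look-alike swaps


def typo_variants(label: str) -> set[str]:
    """Single-edit typo variants of a second-level label."""
    variants = set()
    for i, ch in enumerate(label):
        variants.add(label[:i] + label[i + 1:])            # omission
        variants.add(label[:i] + ch + label[i:])            # duplication
        if i < len(label) - 1:                              # adjacent transposition
            variants.add(label[:i] + label[i + 1] + ch + label[i + 2:])
        for alt in SUBSTITUTIONS.get(ch, ""):               # character substitution
            variants.add(label[:i] + alt + label[i + 1:])
    variants.discard(label)  # swapping two identical letters reproduces the brand
    variants.discard("")
    return variants


def candidate_domains(label: str, real_tld: str = "com") -> set[str]:
    """Typo variants on the real TLD, the exact brand on other TLDs, and combosquats."""
    domains = {f"{v}.{real_tld}" for v in typo_variants(label)}
    domains |= {f"{label}.{tld}" for tld in ALT_TLDS if tld != real_tld}   # TLD variation
    domains |= {f"{label}-{kw}.{real_tld}" for kw in COMBO_KEYWORDS}      # combosquatting
    return domains
```

For a label like `paypal`, the output includes `paypa1.com`, `paypl.com`, `paypal.co`, and `paypal-login.com`. A watchlist like this complements pattern-based monitoring. It cannot replace it, because attackers are not limited to single edits.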

These aren’t crude fakes. They’re engineered to pass a quick visual scan.

According to Zscaler ThreatLabz, GoDaddy hosts 21.7% of typosquatting and brand impersonation domains, with NameCheap accounting for a further 7.3%. The `.com` TLD dominates at 39.4% of phishing domains, precisely because its familiarity reduces user suspicion.

This stage is the earliest detectable signal in the impersonation lifecycle. No phishing email has been sent. No victim has clicked anything. The infrastructure doesn’t yet exist. But the domain does, and that registration event is observable through continuous monitoring of newly registered domains for brand-targeting patterns.

Detecting at this stage means disrupting a campaign before it causes harm.

Stage 2: Infrastructure configuration

The green padlock no longer means what users think it does.

Once a lookalike domain is registered, attackers move fast. Hosting is provisioned, often on shared or cloud infrastructure with minimal identity verification. DNS records are configured. A phishing kit or cloned brand page is deployed. A TLS certificate is issued, typically within minutes, through an automated certificate authority.

Let’s Encrypt is the CA of choice. Zscaler ThreatLabz found that 48.4% of analyzed phishing domains carried Let’s Encrypt certificates. A 2026 arXiv TLS analysis of domain datasets found Let’s Encrypt issues 87.6% of certificates in the unpopular domain set, a category that closely mirrors phishing domain behavior. Free, automated, and frictionless, it’s the obvious choice for attackers who need to look legitimate fast.

The padlock signals encryption, not trustworthiness. That distinction matters.

Each of these setup steps leaves detectable signals. TLS issuance patterns, hosting provider choices, and DNS configuration sequences are all observable before any campaign distribution begins. For security teams monitoring at this layer, infrastructure setup is a detection opportunity, not a forensic artifact.

Stages 3–5: Distribution, Harvesting, and Fraud

This is where most traditional detection tools first encounter the threat. By that point, the attacker is already ahead.

- Stage 3: Distribution. The campaign goes live across email, SMS, SEO poisoning, social media, and paid search ads. Phishing pages are indexed, links are circulating, and the infrastructure built in Stages 1 and 2 is fully operational. Email filters and threat intelligence feeds start picking up signals here, but the setup work is done.

- Stage 4: Victim interaction and credential harvesting. Users land on the phishing page. According to USENIX research, a typical phishing attack spans 21 hours between the first and last victim visit, and over 37% of victim traffic occurs after the attack is detected. Detection alone doesn’t close the window: unless blocking and takedown follow quickly, victims keep arriving.

- Stage 5: Account takeover and fraud. Harvested credentials fuel account takeover and financial fraud, or are sold on criminal marketplaces. Javelin Strategy & Research and AARP found that account takeover fraud resulted in nearly $13 billion in losses in 2023 alone.

By Stage 3, the detection window has closed to a crack. By Stages 4 and 5, exposure has already occurred. The damage isn’t theoretical. It’s accumulating.

Why user awareness alone is not a sufficient detection layer

Security awareness training works. That’s not the debate.

KnowBe4’s 2025 Phishing by Industry Benchmarking Report, analyzing 67.7 million phishing simulations across 14.5 million users, found that training reduces phishing click rates by 86% over 12 months. That’s a meaningful, measurable outcome. No security leader should walk away from that investment.

But the structural problem remains: even an 86% reduction leaves a residual exposure window. At enterprise scale, that window is costly.

Take an organization with 10,000 employees and a baseline click rate of 33%. An 86% reduction brings that rate down to roughly 4.6% after 12 months of training, which still leaves about 460 people susceptible. Each one is a potential entry point. And the phishing infrastructure targeting them doesn’t pause while your training program matures.
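The arithmetic behind the 10,000-employee example is easy to reproduce. It treats the 86% figure as a relative reduction applied uniformly to the baseline click rate, which is a simplifying assumption. Real reductions vary by team and by lure type.

```python
def residual_susceptible(headcount: int, baseline_click_rate: float, reduction: float) -> int:
    """Users still expected to click after training cuts the click rate by `reduction`."""
    post_training_rate = baseline_click_rate * (1 - reduction)  # 0.33 * 0.14 ≈ 0.046
    return round(headcount * post_training_rate)


print(residual_susceptible(10_000, 0.33, 0.86))  # → 462
```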

Three structural limitations explain why awareness training can’t carry the detection load alone:

- 1. Human detection breaks down under pressure.

The median time for an employee to click a malicious link is 21 seconds, according to Verizon’s DBIR analysis. Under cognitive load, time pressure, or a convincing pretext, even well-trained users make mistakes. Training improves averages. It doesn’t eliminate tail risk.

- 2. Sophisticated impersonation is engineered to defeat visual inspection.

Pixel-perfect clones of banking portals, airline loyalty programs, and eCommerce checkout flows aren’t built to look suspicious. They’re built to look identical. A trained user comparing a cloned site to the real thing (same logo, same layout, same TLS padlock) has no reliable visual signal to act on.

- 3. Delivery-stage detection doesn’t neutralize the infrastructure.

Even when email filters catch 95% of phishing messages and trained users avoid clicking, the phishing infrastructure stays live. It keeps operating across SMS, SEO-poisoned search results, and social media, channels that sit entirely outside the inbox-level detection model. Stopping the email doesn’t stop the campaign.

The evidence on training itself is also mixed. A 2025 Purdue University study published on arXiv, covering 12,511 participants at a financial technology firm, found that anti-phishing training showed no statistically significant improvement in click rates or reporting behavior, regardless of training modality.

Awareness training is a necessary layer. It reduces human risk at the delivery stage, and that matters. But it operates downstream of where impersonation-driven phishing campaigns are built and deployed. Relying on it as the primary detection mechanism means your first signal of an active campaign is a user who already clicked.

6 proven methods to detect phishing before user compromise

The six methods below operate at the infrastructure and brand abuse signal layer, upstream of the inbox, upstream of the endpoint. They’re designed for impersonation-driven campaigns: the kind where attackers register lookalike domains, clone branded login pages, and distribute credential-harvesting links across email, SMS, and social channels.

These methods won’t catch every phishing variant. But for brand-targeted attacks, they create a detection window before widespread victim interaction begins. According to the APWG, financial services and SaaS platforms account for over 40% of phishing targets, sectors where impersonation-driven campaigns are the dominant attack pattern.

Each method below answers one question: how does this surface phishing activity before users are compromised?

Method 1: Monitor newly registered lookalike domains for brand-targeting patterns

By the time a lookalike domain appears in a threat feed, it may already be live and harvesting credentials. Continuous monitoring of newly registered domains closes that gap at the source.

This method works by ingesting certificate transparency logs, TLD zone file data, and domain registration APIs in near real-time. Detection logic applies fuzzy string matching against known brand name variations, flags typosquatting patterns (character substitution, homoglyphs, combosquatting), and surfaces domains that pair brand trademarks with suspicious keywords like `-verify`, `-secure`, or `-login`.
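The detection logic can be sketched in a few lines of Python, under several stated assumptions. The confusables map is deliberately tiny, and a real deployment would use a full Unicode confusables table. `second_level_label` ignores multi-part public suffixes such as `co.uk`. `difflib.SequenceMatcher` stands in for whatever fuzzy matcher you use, and the 0.8 threshold is arbitrary. The function names are hypothetical. Each newly observed domain, whether from CT logs, zone files, or a registration feed, gets a list of reasons it looks brand-targeting.

```python
from difflib import SequenceMatcher

# Assumed, deliberately tiny confusables map: a few Cyrillic look-alikes plus
# digit-for-letter swaps. Production systems need a full confusables table.
CONFUSABLES = str.maketrans({
    "\u0430": "a",  # Cyrillic a
    "\u0435": "e",  # Cyrillic ie
    "\u043e": "o",  # Cyrillic o
    "\u0440": "p",  # Cyrillic er
    "\u0441": "c",  # Cyrillic es
    "0": "o", "1": "l", "3": "e",
})
SUSPICIOUS_KEYWORDS = {"login", "verify", "secure", "security"}


def to_unicode(domain: str) -> str:
    """Decode punycode (xn--) labels so homoglyphs become visible."""
    try:
        return domain.encode("ascii").decode("idna")
    except UnicodeError:
        return domain  # already Unicode, or not decodable: keep as-is


def second_level_label(domain: str) -> str:
    """Naive: the label left of the TLD (ignores suffixes like co.uk)."""
    parts = domain.split(".")
    return parts[-2] if len(parts) >= 2 else parts[0]


def flag_reasons(domain: str, brand: str, owned: set[str],
                 threshold: float = 0.8) -> list[str]:
    """Why a newly observed domain looks brand-targeting (empty list = no flag)."""
    domain = to_unicode(domain.rstrip(".")).lower()
    if domain in owned:
        return []
    label = second_level_label(domain)
    folded = label.translate(CONFUSABLES)
    tokens = folded.split("-")
    reasons = []
    if folded != label and brand in tokens:
        reasons.append("character_substitution")
    if label == brand:
        reasons.append("tld_variant")
    if brand in tokens and SUSPICIOUS_KEYWORDS & set(tokens):
        reasons.append("combosquat")
    if not reasons and SequenceMatcher(None, folded, brand).ratio() >= threshold:
        reasons.append("typosquat")
    return reasons
```

With `brand="paypal"` and `owned={"paypal.com"}`, the results are as follows. `paypa1.com` and a Cyrillic-`а` lookalike are flagged as character substitution. `paypal.co` is flagged as a TLD variant, `paypal-login.com` as a combosquat, and `paypl.com` as a typosquat. Unrelated domains return an empty list.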

The scale of the problem makes this non-negotiable. Zscaler ThreatLabz analyzed over 30,000 lookalike domains targeting 500 major brands in a six-month window in 2024 and confirmed more than 10,000 as malicious. That’s roughly 55 new malicious lookalike domains per day.

The detection approach is also research-validated. The BadDomains system (NASK/CERT Polska, Sensors, 2026) demonstrated that machine learning models trained on registry data can identify phishing domains at registration time, before they’re weaponized and distributed.

This is Stage 1 of the impersonation lifecycle. Detecting here gives your team hours, not minutes, to act before any user is exposed.

Method 2: Track brand impersonation signals and cloned digital assets

Attackers don’t build phishing pages from scratch. They clone yours.

Login portals, checkout flows, and customer support pages are copied pixel-by-pixel and redeployed on attacker-controlled domains. Mimecast research shows brand impersonation attacks have surged over 360% since 2020. For financial services, eCommerce, airlines, and hospitality brands, whose interfaces are high-recognition targets, that exposure is acute.

Detection at this stage focuses on identifying structural and visual matches between suspected pages and known brand assets:

- Pixel-level visual similarity analysis comparing suspected pages against authenticated brand templates

- Copied HTML and CSS structure detection, which surfaces kit-based clones even when visual elements are partially altered

- Logo, color scheme, and UI pattern recognition on non-brand-owned domains

- Phishing kit deployment pattern monitoring, which identifies reused code signatures across multiple campaigns
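The copied-structure signal can be approximated without rendering anything. The Python sketch below substitutes a common near-duplicate technique: it computes Jaccard similarity over short sequences ("shingles") of HTML start tags and their class names. This is not pixel-level visual analysis, and the shingle size and any alerting threshold are assumptions to tune. Text and attribute values such as form targets are ignored, so a kit that swaps the copy and the credential endpoint still scores as a structural match.

```python
from html.parser import HTMLParser


class TagSequence(HTMLParser):
    """Collect start tags (with class names) in document order, ignoring text."""

    def __init__(self) -> None:
        super().__init__()
        self.tags: list[str] = []

    def handle_starttag(self, tag, attrs):
        classes = dict(attrs).get("class") or ""
        self.tags.append(f"{tag}.{classes}" if classes else tag)


def shingles(html: str, k: int = 3) -> set[tuple[str, ...]]:
    """All runs of k consecutive tags."""
    parser = TagSequence()
    parser.feed(html)
    parser.close()
    seq = parser.tags
    return {tuple(seq[i:i + k]) for i in range(len(seq) - k + 1)}


def structural_similarity(html_a: str, html_b: str) -> float:
    """Jaccard similarity of tag shingles: 1.0 means identical structure."""
    a, b = shingles(html_a), shingles(html_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

In practice you would compare each suspected page against a small set of authenticated brand templates (login, checkout, support). You would then send high scorers on non-brand-owned domains to analyst review.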

This method operates at Stage 2 of the phishing lifecycle, after infrastructure is configured but before distribution begins. A cloned page detected and taken down before the first phishing email is sent closes the exposure window before it opens, rather than shrinking it after the fact.

Method 3: Analyze Hosting and TLS Certificate Patterns Associated with Impersonation Infrastructure

A TLS padlock doesn’t mean a site is safe. It means the connection is encrypted, and the certificate behind that padlock is often free and issued in minutes. Attackers know it.

Certificate transparency (CT) logs record publicly trusted TLS certificates as certificate authorities issue them. Public search interfaces such as crt.sh make those logs queryable in near-real-time. Monitoring these logs for certificates issued to domains containing your brand keywords surfaces impersonation infrastructure at Stage 2 of the phishing lifecycle, before distribution begins.
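A minimal Python sketch of keyword-based CT monitoring through crt.sh follows. Several details are assumptions based on crt.sh’s commonly used public behavior rather than a documented, versioned API:

- `%` acts as a wildcard in the `q` parameter.
- `output=json` returns a list of records.
- Records carry `name_value` (newline-separated names), `issuer_name`, and `not_before`.

crt.sh is also rate-limited. High-volume programs usually consume CT log feeds directly. The parsing is kept separate from the network call so it can be checked offline.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

CRTSH_BASE = "https://crt.sh/"  # public CT search front end (JSON output assumed)


def crtsh_query_url(keyword: str) -> str:
    """Build a crt.sh search URL; '%' acts as a wildcard in the q parameter."""
    return CRTSH_BASE + "?" + urlencode({"q": f"%{keyword}%", "output": "json"})


def fetch_records(keyword: str, timeout: float = 30.0) -> list[dict]:
    """Network call; crt.sh is rate-limited and can be slow, so poll politely."""
    with urlopen(crtsh_query_url(keyword), timeout=timeout) as resp:
        return json.load(resp)


def is_owned(name: str, owned: set[str]) -> bool:
    """Exact match or true subdomain of a brand-owned domain."""
    return any(name == d or name.endswith("." + d) for d in owned)


def brand_cert_hits(records: list[dict], keyword: str,
                    owned: set[str]) -> list[tuple[str, str, str]]:
    """(name, issuer, not_before) for brand-keyword names the brand does not own."""
    hits = set()
    for rec in records:
        # name_value may hold several names separated by newlines
        for name in rec.get("name_value", "").lower().split("\n"):
            name = name.strip().removeprefix("*.")
            if keyword in name and not is_owned(name, owned):
                hits.add((name, rec.get("issuer_name", ""), rec.get("not_before", "")))
    return sorted(hits)
```

A plain substring match is noisy and misses homoglyphs. In practice, the names returned here would feed the same fuzzy-matching logic used for newly registered domains.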

The speed matters. Zimperium’s 2024 phishing chronology research found that 60% of newly registered phishing domains receive a TLS certificate within two hours of registration. That’s a fully operational, HTTPS-enabled phishing site before most detection tools have indexed the domain.

Additional signals to monitor alongside CT logs:

- Hosting provider and ASN clustering – phishing infrastructure frequently concentrates on low-verification hosting providers with minimal identity checks

- Co-hosting patterns – multiple brand-targeting domains sharing the same IP or hosting account indicate coordinated campaign infrastructure

- Rapid certificate issuance – bulk cert requests across brand-adjacent domains in a short window signal active campaign setup
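Two of these signals reduce to simple aggregations, sketched below in Python. The input shape (dicts with `domain`, `ip`, and certificate issuance timestamps) and the default thresholds are hypothetical. Co-hosting groups brand-targeting domains by IP. Rapid issuance counts the maximum number of certificates falling inside any sliding time window.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def cohosted_clusters(observations: list[dict], min_domains: int = 3) -> dict[str, set[str]]:
    """IPs hosting at least `min_domains` distinct brand-targeting domains."""
    by_ip = defaultdict(set)
    for obs in observations:
        by_ip[obs["ip"]].add(obs["domain"])
    return {ip: doms for ip, doms in by_ip.items() if len(doms) >= min_domains}


def max_issuance_in_window(issued: list[datetime], window: timedelta) -> int:
    """Largest number of certificate issuances within any sliding window."""
    times = sorted(issued)
    best = start = 0
    for end in range(len(times)):
        while times[end] - times[start] > window:  # shrink window from the left
            start += 1
        best = max(best, end - start + 1)
    return best
```

Grouping by ASN or hosting account instead of a single IP follows the same pattern. Swap the grouping key for whatever enrichment your hosting data provides.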

HTTPS is not a trust indicator. Zscaler ThreatLabz found that 48.4% of brand impersonation phishing domains used Let’s Encrypt certificates – free, automated, and requiring no identity verification. This method detects configured infrastructure before any victim interaction occurs.

Method 4: Detect SEO Poisoning and Search-Distributed Phishing Pages

Email filters can’t catch what never arrives in an inbox.

SEO poisoning flips the phishing model. Instead of pushing malicious content to victims, attackers manipulate search rankings to pull high-intent users directly to credential-harvesting pages. Someone searching for their bank’s login page, a customer service number, or a product portal is already primed to trust what they find. That’s what makes this channel dangerous.

In January 2026, Fortra’s Intelligence and Research Experts uncovered HaxorSEO, a marketplace selling backlinks to compromised legitimate domains for as little as $6 per listing. In documented cases, fraudulent banking login pages ranked above the legitimate institutions they were impersonating, as reported by Infosecurity Magazine.

Detection requires active search monitoring, not passive inbox filtering:

- Monitor search results for brand-related queries: brand name, product names, login-related terms, and customer service phrases

- Flag non-brand-owned pages appearing in top organic or paid positions for those queries

- Analyze ranking page structure and content for impersonation signals

- Monitor paid search placements for brand term hijacking
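The core check in search monitoring is ownership: is this top-ranked result hosted on a domain you control? The Python sketch below assumes search results have already been collected by whatever rank-tracking source you use. The record shape (`query`, `rank`, `url`, `paid`) is hypothetical. Note the ownership test: a bare `endswith("paypal.com")` would wrongly accept `notpaypal.com`.

```python
from urllib.parse import urlsplit


def is_brand_owned(host: str, owned: set[str]) -> bool:
    """Exact match or true subdomain; a bare suffix check would accept notpaypal.com."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in owned)


def suspicious_results(results: list[dict], owned: set[str], max_rank: int = 10) -> list[dict]:
    """Top-ranked organic or paid results for brand queries hosted off-brand."""
    flagged = []
    for r in results:
        host = urlsplit(r["url"]).hostname or ""
        if r["rank"] <= max_rank and not is_brand_owned(host, owned):
            flagged.append(r)
    return flagged
```

Flagged results then go to content analysis (cloned structure, off-brand form targets) rather than straight to takedown. Resellers, news coverage, and review sites also rank for brand terms.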

This method operates at Stage 3 of the phishing lifecycle, but consistent monitoring surfaces malicious infrastructure before victim volume accumulates at scale.

Method 5: Correlate Phishing Indicators Across Channels – Email, SMS, Social, Search

Modern impersonation campaigns don’t pick a single channel. The same phishing infrastructure is often distributed simultaneously via email, SMS, social media, and search poisoning. Scope your detection to email headers alone, and you’re seeing one thread of a much larger operation.

Cross-channel correlation changes that. The approach aggregates indicators of compromise (IOCs) across email headers, SMS sender IDs, social media account activity, and search monitoring, then identifies shared infrastructure signals. The same domain, IP block, or phishing kit appearing across multiple channels maps the full campaign scope, not just the slice that hit your inbox.
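A minimal Python sketch of the correlation step follows. The IOC record shape and the indicator keys (`domain`, `ip`, `kit_hash`) are hypothetical. The logic indexes every indicator by the channels it was seen in, then keeps indicators that appear in at least two channels.

```python
from collections import defaultdict

INDICATOR_KEYS = ("domain", "ip", "kit_hash")  # hypothetical normalized fields


def correlate(iocs: list[dict], min_channels: int = 2) -> dict[tuple[str, str], set[str]]:
    """Indicators observed in at least `min_channels` distinct channels."""
    channels_by_indicator = defaultdict(set)
    for ioc in iocs:
        for key in INDICATOR_KEYS:
            value = ioc.get(key)
            if value:
                channels_by_indicator[(key, value)].add(ioc["channel"])
    return {ind: chans for ind, chans in channels_by_indicator.items()
            if len(chans) >= min_channels}
```

This only links indicators that are directly shared. Mapping a full campaign usually requires transitive grouping as well. For example, an SMS domain and a social-media domain resolving to the same IP belong together, which a union-find or graph pass over these results can capture.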

The SMS vector is increasingly dangerous. According to the Verizon 2025 Mobile Security Index, 77% of organizations believe AI-assisted SMS phishing attacks are likely to succeed. Smishing campaigns are harder for users to scrutinize and easier for attackers to scale.

Correlating IOCs across channels elevates detection from a single-signal event to a campaign-level intelligence picture. When you can see the full distribution footprint, takedown and disruption actions become faster and more targeted.

Method 6: Identify Credential-Harvesting Page Patterns Through Structural and Behavioral Signals

Even the most convincing phishing page leaves fingerprints.

Credential-harvesting pages consistently exhibit structural and behavioral anomalies that distinguish them from legitimate login pages, regardless of how polished the visual clone appears. Key detection signals include:

- Form action URLs pointing off-brand: legitimate login forms POST credentials to brand-owned endpoints. Harvesting pages route submissions to external domains, a pattern well-documented in modern phishing kits like Tycoon 2FA, which use reverse-proxy infrastructure to relay credentials in real time

- Absent or misconfigured security headers: pages impersonating brand-owned properties routinely lack HSTS, Content Security Policy, and X-Frame-Options headers that legitimate sites enforce

- Phishing kit fingerprints: known kit structures, hardcoded error strings, and predictable redirect patterns after form submission are identifiable across campaigns sharing the same kit lineage

- Post-submission redirect behavior: pages that redirect immediately after credential entry, rather than returning a session, signal exfiltration in progress
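The first two signals can be checked from a fetched page and its response headers, as in the Python sketch below. The function names and the `owned` allow-list are assumptions, and the fetching step is omitted. A form with a relative or empty `action` resolves against the page URL, so a form on an attacker domain that posts back to itself is still flagged as off-brand. Missing security headers are a weak signal on their own, since plenty of legitimate sites also lack them. Treat them as supporting evidence rather than a verdict.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit

REQUIRED_HEADERS = ("strict-transport-security", "content-security-policy", "x-frame-options")


class FormActions(HTMLParser):
    """Collect the action attribute of every <form> on the page."""

    def __init__(self) -> None:
        super().__init__()
        self.actions: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            self.actions.append(dict(attrs).get("action") or "")


def is_brand_owned(host: str, owned: set[str]) -> bool:
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in owned)


def page_signals(page_url: str, html: str, headers: dict[str, str], owned: set[str]) -> list[str]:
    """Off-brand form targets and missing security headers for one fetched page."""
    parser = FormActions()
    parser.feed(html)
    parser.close()
    signals = []
    for action in parser.actions:
        target = urljoin(page_url, action)  # empty action posts back to page_url
        host = urlsplit(target).hostname or ""
        if not is_brand_owned(host, owned):
            signals.append(f"form posts off-brand: {host}")
    present = {name.lower() for name in headers}
    for name in REQUIRED_HEADERS:
        if name not in present:
            signals.append(f"missing header: {name}")
    return signals
```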

This method applies both proactively, scanning suspicious domains surfaced through domain and infrastructure monitoring, and reactively, analyzing reported URLs. It operates primarily at Stages 2 and 3 of the phishing lifecycle, surfacing harvesting infrastructure before significant victim volume accumulates.

Connecting Early Phishing Detection to Downstream Fraud Reduction

Phishing isn’t just a security problem. It’s the opening move in a fraud chain that ends in real financial loss.

The sequence is direct: impersonation-driven phishing captures credentials, those credentials enable account takeover (ATO), and ATO translates into financial fraud, customer disputes, and churn.

According to the IBM Cost of a Data Breach Report 2025, phishing-initiated breaches cost organizations an average of $4.8 million per incident. That’s not a worst-case figure; it’s the average. Downstream, Javelin Strategy & Research reported that ATO fraud losses reached $15.6 billion in 2024, up from nearly $13 billion the year prior. SpyCloud confirmed ATO attacks increased 24% year-over-year in 2024, with no sign of reversing.

For financial services, eCommerce, airlines, hospitality, and telcos, where customer accounts hold loyalty points, stored payment methods, and personal data, a single compromised credential can trigger cascading fraud across multiple systems.

The strategic implication is straightforward: every hour you reduce the gap between domain registration and detection is an hour fewer credentials can be harvested and weaponized. Shrink the detection window, and you shrink the fraud exposure pool.

Organizations that identify phishing infrastructure before distribution interrupt the fraud chain before it reaches victims. That means fewer compromised accounts, lower remediation costs, and less customer churn driven by eroded trust. For security and fraud leaders in high-value consumer sectors, this is a measurable reduction in fraud losses tied directly to detection timing.

Memcyco’s Digital Impersonation monitoring operates at precisely this upstream stage, surfacing brand abuse and lookalike infrastructure before credentials are at risk. For teams focused on the downstream impact of account takeover, that early-stage visibility is where fraud reduction actually begins.

Measuring Early Phishing Detection Effectiveness

You can’t improve what you can’t measure. For security and fraud leaders building a case for upstream detection investment, the right metrics separate a credible posture from a gut feeling.

Think of these four metrics as your detection maturity scorecard. Each is measurable, reportable, and tied directly to exposure reduction.

- 1. Time-to-Detection from Domain Registration (TTD-DR)

This measures elapsed time between a lookalike domain’s registration and your first detection signal. It’s the most direct indicator of how early your monitoring fires. Research published in Sensors (NASK/CERT Polska, 2026) found that detection typically comes around five days after domain registration, a window that covers over 80% of phishing cases. A mature detection posture targets TTD-DR under 24 hours for high-confidence impersonation signals. Anything beyond 48 hours is an open window for attackers.

- 2. Time-to-Disruption from Infrastructure Setup (TTD-IS)

This tracks elapsed time between confirmed phishing infrastructure going live (TLS certificate issuance, page deployment, and DNS propagation) and a successful disruption action: a takedown request submitted, a blocklist updated, or an internal alert issued. TTD-IS captures operational response speed, not just detection. A low TTD-DR paired with a high TTD-IS signals a detection-response gap attackers will exploit.

- 3. Pre-Compromise Detection Rate (PCDR)

This is the percentage of phishing incidents identified before any confirmed victim interaction. Calculate it as:

- (Phishing incidents detected at Stages 1-2) / (Total phishing incidents identified) × 100

A mature posture targets PCDR above 50% for impersonation-driven campaigns. If most detections come from user reports or post-delivery signals, your PCDR will show it, and give you a concrete number to improve against.

- 4. Reduction in successful credential harvesting events

Track confirmed credential submissions to phishing infrastructure over rolling 90-day periods. Source this from threat intelligence feeds, dark web monitoring, or customer-reported account compromise events. A downward trend here is the clearest signal that upstream detection is cutting real fraud losses.

- Secondary operational metrics worth tracking:

- Number of lookalike domains identified per month

- Average time from detection to takedown completion

- Percentage of phishing campaigns detected before the first victim report

One caveat: baseline metrics vary by industry, brand exposure, and existing detection maturity. A financial services firm with high brand visibility will see different volumes than a regional telco. Establish your own baseline before benchmarking against industry averages. The goal is directional improvement, not hitting an arbitrary number.
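As a starting point for reporting, the three core timing and rate metrics can be computed from a simple incident log. The Python sketch below assumes a hypothetical record shape: registration, detection, infrastructure-live and disruption timestamps, plus the lifecycle stage at which each incident was first detected. It also makes its own choice of aggregating TTD-DR and TTD-IS as medians, since a few stale domains can skew a mean badly. Stages 1 and 2 count toward PCDR, following the formula above.

```python
from datetime import datetime, timedelta
from statistics import median


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def scorecard(incidents: list[dict]) -> dict:
    """Median TTD-DR and TTD-IS in hours, plus PCDR as a percentage."""
    ttd_dr = [_hours(i["first_detected_at"] - i["registered_at"])
              for i in incidents if i.get("registered_at")]
    ttd_is = [_hours(i["disrupted_at"] - i["infra_live_at"])
              for i in incidents if i.get("infra_live_at") and i.get("disrupted_at")]
    # Stage numbers follow the five-stage lifecycle; Stages 1-2 are pre-compromise.
    pre_compromise = sum(1 for i in incidents if i["detected_stage"] <= 2)
    return {
        "median_ttd_dr_h": median(ttd_dr) if ttd_dr else None,
        "median_ttd_is_h": median(ttd_is) if ttd_is else None,
        "pcdr_pct": 100 * pre_compromise / len(incidents) if incidents else None,
    }
```

Incidents with no known registration or infrastructure timestamp are simply skipped for the corresponding metric. Report how many were skipped, or the medians can look better than they are.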

These metrics give you a language for communicating detection posture to leadership, shifting the conversation from “we have phishing defenses” to “here’s how early we’re catching threats, and here’s how that’s trending.”

Building a Detection Maturity Model: From Reactive to Proactive

Where does your organization sit on the detection spectrum? Most security leaders have a gut feeling but no structured vocabulary to communicate it internally or measure progress over time. This three-level model gives you both.

- Level 1 – Reactive

Detection occurs primarily at Stages 3-5 of the phishing lifecycle: after distribution, during victim interaction, or post-compromise. Primary inputs are user reports, threat feed updates, and post-delivery email filtering. Pre-compromise detection rate (PCDR) sits below 10%. Time-to-detection from domain registration (TTD-DR) is measured in days to weeks. Research from CAIDA found a median detection delay of 16.3 days for malicious domains, with phishing infrastructure remaining accessible for an average of 11.5 days after detection. The core gap: no upstream visibility into domain registration or infrastructure signals.

- Level 2 – Informed

The organization monitors certificate transparency logs and domain registration feeds for brand-targeting patterns. Takedown workflows exist but are largely manual and triggered by discrete alerts. PCDR typically reaches 20-40%. TTD-DR improves to hours or days for known patterns. The primary gap: monitoring is point-in-time rather than continuous, and cross-channel correlation across email, SMS, social, and search is limited or absent.

- Level 3 – Proactive

Continuous monitoring spans domain registration, infrastructure configuration, and multi-channel distribution signals. Cross-channel IOC correlation is automated. Defined response playbooks cut TTD-DR to under four hours for impersonation-driven campaigns. PCDR exceeds 50% for this campaign type. Detection happens before widespread victim interaction, not after.

The gap between Level 1 and Level 3 isn’t just operational. It’s financial. Every day a phishing site runs undetected, credentials are harvested and accounts are at risk.

Use these levels to benchmark your current posture, identify the highest-leverage gap to close, and frame the investment case for upstream detection capability.

From Detection Timing to Proactive Impersonation Monitoring

If phishing detection starts at the inbox, the infrastructure is already live. The campaign may be running across email, SMS, and search simultaneously. Some victims may have already submitted their credentials.

For impersonation-driven campaigns, the real detection window is upstream: at domain registration, infrastructure configuration, and brand abuse signal stages. Organizations that build detection capability at these earlier lifecycle stages compress their exposure window, reduce credential harvesting volume, and cut the downstream fraud and remediation costs that follow.

That’s not a theoretical benefit. It’s the difference between disrupting an attack before it scales and managing the fallout after it does.

The next step is a practical one: assess where your detection capability currently sits in the lifecycle, and whether it reaches far enough upstream to matter.

- Explore early-stage impersonation monitoring: Memcyco’s Digital Impersonation solution uses PoSA (Proof of Source Authenticity) technology to infiltrate live phishing sites, disrupt attacker workflows in real time, and surface brand abuse signals before widespread victim interaction occurs.

- See how upstream detection connects to ATO prevention: Memcyco’s Account Takeover solution shows how closing the detection timing gap directly reduces credential theft and account compromise rates.

Conclusion

Phishing detection that starts at the inbox is already late. The six methods covered here, from lookalike domain monitoring to credential-harvesting page analysis, target the stages where disruption is still possible. Compressing the window between infrastructure setup and detection is the most direct lever available for reducing credential theft and downstream fraud.

If Detection Starts at the Inbox, You’re Already Behind

Phishing infrastructure is live hours before the first email is sent. Memcyco’s platform detects impersonation signals at the domain, infrastructure, and brand abuse layer – before your customers are exposed. See how early-stage impersonation monitoring closes the detection timing gap.


FAQs

Q: At what stage of a phishing attack can organizations realistically detect it before users are compromised?

A: For impersonation-driven phishing campaigns, the earliest detectable signals appear at Stage 1 (domain registration) and Stage 2 (infrastructure configuration — TLS issuance, hosting setup, page deployment). Research from NASK/CERT Polska (2026) demonstrates that machine learning models trained on domain registry data can identify phishing domains at registration time, before they are weaponized. Certificate transparency logs surface TLS issuance for brand-targeting domains in near-real-time. Organizations with continuous monitoring at these stages can detect impersonation infrastructure within hours of setup — before any campaign distribution begins.
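The certificate transparency check described above boils down to screening each newly logged domain name for brand abuse. The sketch below assumes the names have already been extracted from CT log entries (for example, from certificate subject alternative names) by a separate monitoring client, which is not shown. The brand keyword `examplebank`, the owned-domain set, and the edit-distance threshold of 1 are hypothetical placeholders.

```python
# Hypothetical brand keyword and owned domains; substitute your own.
BRAND = "examplebank"
OWNED_DOMAINS = {"examplebank.com"}


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # substitute (free if equal)
        prev = cur
    return prev[-1]


def is_brand_targeting(name: str, brand: str = BRAND, max_distance: int = 1) -> bool:
    """Flag a certificate domain name that embeds or closely mimics the brand."""
    name = name.lower().rstrip(".")
    if name.startswith("*."):  # wildcard certificate entries
        name = name[2:]
    if any(name == d or name.endswith("." + d) for d in OWNED_DOMAINS):
        return False  # the brand's own domains and subdomains
    for label in name.split("."):
        if brand in label:  # combosquatting or TLD variation, e.g. examplebank-login.com
            return True
        if levenshtein(label, brand) <= max_distance:  # typosquatting, e.g. examp1ebank.com
            return True
    return False


names = ["examplebank-login.com", "examp1ebank.com", "*.examplebank.co",
         "www.examplebank.com", "unrelated.org"]
print([n for n in names if is_brand_targeting(n)])
# ['examplebank-login.com', 'examp1ebank.com', '*.examplebank.co']
```

This is a screening heuristic, not a verdict: flagged names would still need infrastructure and page analysis. Unicode homoglyph domains appear in CT logs in punycode (`xn--`) form, so they would need decoding before this label comparison can catch them.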

Q: How long does phishing infrastructure typically remain active before detection?

A: The average lifespan of a phishing website is approximately 54 hours (2.25 days), but the median is just 5.46 hours: many sites are taken down quickly, while a minority persist much longer and pull the average up (ACM 2025 analysis). The USENIX ‘Sunrise to Sunset’ study found the average phishing attack spans 21 hours between first and last victim visit. Critically, the average gap between APWG detection and Google Safe Browsing detection is 223 hours (about 9.3 days), meaning blocklist-dependent detection leaves a multi-day exposure window. This gap is precisely where upstream infrastructure monitoring provides the most value.
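Why a 54-hour mean can sit alongside a roughly 5-hour median is easiest to see with a skewed sample. The lifespans below are invented for illustration and are not the ACM dataset; they simply have the same shape: most sites short-lived, a few very long-lived.

```python
import statistics

# Hypothetical phishing-site lifespans in hours. Illustrative only:
# NOT data from the ACM 2025 analysis, just a right-skewed sample.
lifespans_h = [1, 2, 3, 4, 5, 6, 8, 12, 150, 300]

mean_h = statistics.mean(lifespans_h)      # pulled up by the two long-lived sites
median_h = statistics.median(lifespans_h)  # reflects the typical, short-lived site
share_under_day = sum(h <= 24 for h in lifespans_h) / len(lifespans_h)

print(f"mean={mean_h:.1f}h median={median_h:.1f}h under 24h={share_under_day:.0%}")
# mean=49.1h median=5.5h under 24h=80%
```

The practical reading: a detection process tuned to the average lifespan will miss most sites, because the typical site is already gone long before the average suggests.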

Q: Why isn’t email security filtering sufficient to stop phishing attacks?

A: Email security gateways and inbox-level filtering are valuable and necessary controls, but they operate at Stage 3 of the phishing lifecycle, after infrastructure is fully deployed. They also address only one distribution channel: email. Modern impersonation-driven campaigns distribute via SMS, SEO poisoning, social media, and paid search ads, channels that email security tools do not monitor. Even high-performing email filters leave residual exposure: at enterprise scale, a 1-5% click-through rate on the phishing emails that slip past them still represents significant credential exposure risk. Upstream detection at the infrastructure layer complements, rather than replaces, delivery-stage controls.
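The 1-5% click-through range is easier to weigh as absolute numbers. This minimal sketch assumes a hypothetical volume of 5,000 phishing emails per year that evade filtering; the figure is a placeholder, not a benchmark. Clicks are an exposure count, not a count of confirmed credential submissions.

```python
def expected_clicks(missed_emails: int, click_rate: float) -> float:
    """Expected clicks on phishing emails that evade filtering (simple expectation)."""
    return missed_emails * click_rate


# Hypothetical volume: phishing emails that slip past the filter in one year.
missed_per_year = 5_000

for rate in (0.01, 0.05):  # the 1-5% click-through range
    print(f"{rate:.0%} click-through: ~{expected_clicks(missed_per_year, rate):.0f} clicks")
# 1% click-through: ~50 clicks
# 5% click-through: ~250 clicks
```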

Q: What is a lookalike domain and how do attackers use them in phishing campaigns?

A: A lookalike domain is a malicious domain registered to visually or phonetically mimic a legitimate brand’s domain, designed to deceive users into believing they are interacting with the real organization. Attackers create them using typosquatting (character substitution, missing letters, adjacent key errors), homoglyph attacks (Unicode characters that visually resemble Latin letters), TLD variations (.co vs .com), and combosquatting (adding brand-adjacent words like ‘-secure’, ‘-login’, ‘-verify’). Zscaler ThreatLabz 2024 research identified over 30,000 lookalike domains targeting 500 major brands, with more than 10,000 confirmed malicious. These domains are the foundation of impersonation-driven phishing campaigns.
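The four creation techniques listed above can be sketched as a candidate generator, useful for seeding watchlists or for sanity-checking a monitoring vendor's coverage. The adjacency and homoglyph maps here are deliberately tiny and hypothetical; a real generator would need full keyboard-adjacency tables and a comprehensive confusables list. The brand `examplebank` is a placeholder.

```python
# Hypothetical, deliberately partial maps for illustration only.
ADJACENT_KEYS = {"a": "qsz", "e": "wrd", "o": "ipl", "m": "n", "l": "kp"}  # QWERTY neighbours
HOMOGLYPHS = {"o": ["0", "\u043e"], "l": ["1"], "a": ["\u0430"], "e": ["\u0435"]}  # \u04xx: Cyrillic
ALT_TLDS = ["co", "net", "org"]
COMBO_WORDS = ["secure", "login", "verify"]


def lookalike_domains(brand: str, tld: str = "com") -> set[str]:
    """Generate typosquat, homoglyph, TLD-variation, and combosquat candidates."""
    labels: set[str] = set()
    for i, ch in enumerate(brand):
        labels.add(brand[:i] + brand[i + 1:])                  # missing letter
        for sub in ADJACENT_KEYS.get(ch, ""):
            labels.add(brand[:i] + sub + brand[i + 1:])        # adjacent-key error
        for glyph in HOMOGLYPHS.get(ch, []):
            labels.add(brand[:i] + glyph + brand[i + 1:])      # homoglyph substitution
    for i in range(len(brand) - 1):
        labels.add(brand[:i] + brand[i + 1] + brand[i] + brand[i + 2:])  # swapped letters
    labels.discard(brand)  # swapping identical neighbours can reproduce the brand itself
    domains = {f"{label}.{tld}" for label in labels}
    domains |= {f"{brand}.{alt}" for alt in ALT_TLDS if alt != tld}  # TLD variation
    domains |= {f"{brand}-{word}.{tld}" for word in COMBO_WORDS}     # combosquatting
    return domains


candidates = lookalike_domains("examplebank")
print("examp1ebank.com" in candidates)  # True
```

Candidates containing Unicode homoglyphs are registered and logged in punycode (`xn--`) form; Python's built-in `"idna"` codec can convert them before comparing against registration or CT data.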

Q: How should security leaders measure the effectiveness of their phishing detection program?

A: Four primary metrics provide a practical framework:

- Time-to-Detection from Domain Registration (TTD-DR): elapsed time between a lookalike domain’s registration and first detection signal.

- Time-to-Disruption from Infrastructure Setup (TTD-IS): elapsed time between confirmed phishing infrastructure configuration and successful disruption action.

- Pre-Compromise Detection Rate (PCDR): percentage of phishing incidents identified before any confirmed victim interaction. A mature posture targets >50% PCDR for impersonation-driven campaigns.

- Reduction in Successful Credential Harvesting Events: tracked over rolling 90-day periods.

These metrics enable security leaders to evaluate detection maturity and communicate posture improvement to executive stakeholders.
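The first three metrics can be computed directly from incident timestamps. This is a minimal sketch under stated assumptions: the `Incident` record and its field names are hypothetical, TTD-DR and TTD-IS are summarized as medians (the definitions name no statistic), and TTD-IS only counts incidents that have actually been disrupted. The rolling 90-day harvesting metric depends on fraud data and is left out.

```python
import statistics
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Incident:  # hypothetical record shape
    registered: datetime                     # lookalike domain registration
    first_detected: datetime                 # first detection signal
    infra_confirmed: datetime                # phishing infrastructure configuration confirmed
    disrupted: Optional[datetime] = None     # successful disruption action, if any
    first_victim: Optional[datetime] = None  # first confirmed victim interaction, if any


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def detection_metrics(incidents: list[Incident]) -> dict:
    # TTD-DR: registration -> first detection (median is an assumption)
    ttd_dr = statistics.median(_hours(i.registered, i.first_detected) for i in incidents)
    # TTD-IS: infrastructure confirmed -> disruption, over disrupted incidents only
    is_times = [_hours(i.infra_confirmed, i.disrupted)
                for i in incidents if i.disrupted is not None]
    ttd_is = statistics.median(is_times) if is_times else None
    # PCDR: share of incidents detected before any confirmed victim interaction
    pre = sum(1 for i in incidents
              if i.first_victim is None or i.first_detected < i.first_victim)
    return {"ttd_dr_h": ttd_dr, "ttd_is_h": ttd_is, "pcdr": pre / len(incidents)}


t0 = datetime(2024, 1, 1)


def at(hours: float) -> datetime:
    return t0 + timedelta(hours=hours)


incidents = [
    Incident(t0, at(2), at(1), disrupted=at(5)),                        # caught pre-victim
    Incident(t0, at(30), at(3), disrupted=at(40), first_victim=at(20)), # caught late
    Incident(t0, at(6), at(4), first_victim=at(10)),                    # caught pre-victim, not yet disrupted
]
print(detection_metrics(incidents))
# {'ttd_dr_h': 6.0, 'ttd_is_h': 20.5, 'pcdr': 0.6666666666666666}
```

Medians keep one long-lived outlier from dominating the figure, which matters given how skewed phishing-site lifespans are; reporting the mean alongside would show that tail explicitly.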

Q: What is the connection between phishing detection timing and account takeover fraud?

A: The connection is direct and quantifiable. Phishing is the primary initial access vector for credential theft; stolen credentials enable account takeover (ATO), and ATO drives financial fraud, customer churn, and remediation costs. Account takeover fraud resulted in nearly $13 billion in losses in 2023-2024 (AARP & Javelin Fraud Study), and ATO attacks increased 24% year-over-year in 2024 (SpyCloud). Every hour cut from the detection window, from domain registration to disruption, directly reduces the pool of credentials that can be harvested and weaponized for ATO. Early-stage phishing detection is therefore a direct lever for ATO fraud reduction, not just a security operations metric.